Oxford Mathematician Dawid Kielak talks about his joint work with Marek Kaluba and Piotr Nowak in which they used a computer* to prove a theorem about the behaviour of other computers.

"Here is a practical problem: imagine being given a very large but finite object $X$. Using your bare hands, you construct a small number of elements $g_1, \dots, g_n$ of the automorphism group $\mathrm{Aut}(X)$ (or maybe you were given those; perhaps you even know that they generate $\mathrm{Aut}(X)$). Now, let us say that given two elements of $\mathrm{Aut}(X)$ you can check how they act on $X$, and in particular you can check if these elements are the same. Your goal is to figure out which finite group $G = \langle g_1, \dots, g_n \rangle$ is.

Since you can check which words in the generators $g_1, \dots, g_n$ coincide (you can solve the word problem in $\mathrm{Aut}(X)$), you could construct the Cayley graph of $G$. But now imagine that $X$ was a $10$-dimensional vector space over the finite field $\mathbb{F}_p$ where $p = 101$. The group $\mathrm{GL}_{10}(\mathbb{F}_{101})$ has more elements than there are particles in the observable universe... so good luck with the Cayley graph!
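The size comparison can be checked directly. The sketch below uses the standard count of ordered bases, $|\mathrm{GL}_n(\mathbb{F}_q)| = \prod_{i=0}^{n-1}(q^n - q^i)$. The function name `order_gl` is made up for this illustration, and the figure of roughly $10^{80}$ particles is a commonly quoted order-of-magnitude estimate rather than something taken from this text.

```python
from math import prod


def order_gl(n: int, q: int) -> int:
    """Order of GL_n(F_q), counted as the number of ordered bases of F_q^n.

    The i-th basis vector may be any vector outside the span of the
    previous i vectors, which leaves q**n - q**i choices.
    """
    return prod(q**n - q**i for i in range(n))


size = order_gl(10, 101)

# Assumed rough estimate for the number of particles in the observable universe.
PARTICLES_ESTIMATE = 10**80

print("decimal digits in |GL_10(F_101)|:", len(str(size)))
print("exceeds the particle estimate:", size > PARTICLES_ESTIMATE)
```

The order has more than 200 decimal digits, so enumerating the group element by element is out of the question.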

We need a smarter trick. We will quickly construct a bunch of random elements of $G$, and try to guess what $G$ is from what we can say about these random elements. And here the word *quickly* is key. One way to construct these random elements is to use a Monte Carlo type algorithm of Laszlo Babai from 1991. This algorithm requires on the order of $(\log |G|)^5$ operations to generate elements that are more-or-less random. Unfortunately, $(\log |G|)^5$ is still too large for practical purposes. Another way of generating random elements was proposed by Charles Leedham-Green and Leonard Soicher around 1995; this is the Product Replacement algorithm, which works as follows: instead of trying to randomly move around the group $G$, you try to walk around its generating sets. More specifically, you start with your tuple $(g_1, \dots, g_n)$, and you randomly multiply one of the entries on either the left or the right by another entry (or its inverse). You repeat this process many times, and at the end you select one of the entries of the last tuple at random, and this is the output element. The magic of the Product Replacement algorithm is that in practice it works extremely well. It is implemented in both GAP and MAGMA, so if you have ever tried to construct a random element of a finite group using either of these programs, you probably used Product Replacement.
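The procedure just described can be sketched in a few lines. This is a minimal illustration, not the GAP or MAGMA implementation: permutations stored as tuples of images, the helper names, the number of steps and the seeded random generator are all assumptions made for the example, and practical implementations may differ in their details.

```python
import random


def compose(p, q):
    """Return the permutation 'apply q first, then p'."""
    return tuple(p[q[i]] for i in range(len(q)))


def inverse(p):
    """Return the inverse of the permutation p."""
    inv = [0] * len(p)
    for i, image in enumerate(p):
        inv[image] = i
    return tuple(inv)


def product_replacement(generators, steps, rng):
    """Walk on generating tuples, then return a random entry of the final tuple.

    Needs at least two generators, since each step uses two distinct positions.
    """
    state = list(generators)
    for _ in range(steps):
        i, j = rng.sample(range(len(state)), 2)  # two distinct positions
        factor = state[j] if rng.random() < 0.5 else inverse(state[j])
        if rng.random() < 0.5:
            state[i] = compose(state[i], factor)  # multiply entry i on the right
        else:
            state[i] = compose(factor, state[i])  # multiply entry i on the left
    return rng.choice(state)


# Usage: two generators of the symmetric group on {0, 1, 2, 3, 4}.
swap = (1, 0, 2, 3, 4)   # exchanges 0 and 1
cycle = (1, 2, 3, 4, 0)  # sends i to i + 1 modulo 5
rng = random.Random(2018)
print(product_replacement([swap, cycle], steps=100, rng=rng))
```

Every step replaces one entry by its product with another entry or that entry's inverse, so the tuple generates the same group $G$ throughout the walk.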

And now we start to do natural science: we spend weeks sitting on a tree top observing algorithms in their natural habitat, and we notice that this Product Replacement is very efficient. What is the reason for this? A first putative answer came in 2000: Alexander Lubotzky and Igor Pak showed that the effectiveness of Product Replacement would follow from the automorphism group $\mathrm{Aut}(F_n)$ of a free group $F_n$ exhibiting Kazhdan's Property $(T)$.

Property $(T)$. Before getting to the definition, let us first ask a philosophical question: how can we understand a group? At the very beginning, starting with Évariste Galois in the 1830s, groups were collections of automorphisms of finite objects, and they were understood via their actions. Groups became abstract objects some 50 years later in the work of Arthur Cayley; and not only did they become abstract - they were now allowed to be infinite! How would you study an abstract, infinite group? It seems that it took another 50 years until Emmy Noether popularised homomorphisms as a tool for understanding groups (as well as rings and other algebraic structures). The basic idea behind using a homomorphism is to map your group to something you understand well, and then to study what information you have lost on the way (which is of course encoded in the kernel of the homomorphism). Which raises another question: what are the groups which we do understand well?

(Before answering this question, let me go on a tangent. What happens next in the development of group theory? Well, we go a full circle, and now we are back to studying actions, i.e., again we try to view groups as automorphisms of objects. Learning the lesson from Noether, we try to come up with objects to act on that we understand well; the price is that these actions are not always faithful and some information may be lost. Formally speaking, we look at homomorphisms $H \to \mathrm{Aut}(Y)$, and the obvious question which arises is: how is this different from simply studying homomorphisms? My personal opinion is that it is psychologically different: our brains, so used to thinking about who did what to whom, are well-trained in thinking about actors (elements of $H$) performing actions (on the set $Y$). I think that using this point of view allows us to tap into additional brainpower, and this is why thinking of actions is so prevalent nowadays, and so effective.)

Back to maths: what sort of groups do we understand well? Automorphisms of objects which we understand even better - vector spaces of finite dimension. This line of thought, understanding groups by mapping them to groups of matrices over fields, is called representation theory. It is a classical and fantastically useful piece of mathematics, particularly helpful in trying to understand finite groups.

When the groups are infinite, acting on finite dimensional vector spaces might not be enough. So we relax the assumptions, and now try to look at actions on infinite dimensional vector spaces. But that is way too general to be useful, and a convenient middle ground is to look at actions on Hilbert spaces by linear isometries (these are unitary representations). Just to remind you - Hilbert spaces may be infinite dimensional, but they have a notion of an angle between two crossing lines, and this makes their geometry more tractable.

To define property $(T)$ we need to be slightly more general and consider actions on Hilbert spaces by isometries which are not necessarily linear, which amounts to saying that they do not need to fix the $0$ of the Hilbert space. Now we are ready: a group has property $(T)$ if and only if every one of its actions by isometries on a Hilbert space fixes a point. Roughly speaking, this means that the group has no actions on Hilbert spaces besides the unitary representations.

Automorphisms of $F_n$. It is time for the last of the dramatis personae. The insight of Lubotzky and Pak was that if you want to explain a phenomenon happening to a random walk among generating sets of $G$ for every finite $G$, you should look at the same random walk among generating sets of the free group. The interesting point here is that if you take $a_1, \dots, a_n$ to be a generating set of the free group $F_n$ of rank $n$, then every operation performed by the Product Replacement algorithm is equal to evaluating an automorphism of $F_n$ on every element in the tuple. And now we are really studying a random walk in $\mathrm{Aut}(F_n)$! Random walks on groups with property $(T)$ mix very rapidly, which means that already a few operations performed by the Product Replacement algorithm get you very close to a random element.
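To make the correspondence concrete, here is a sketch in notation that does not appear elsewhere in this text (the names $R_{ij}^{\pm}$ and $L_{ij}^{\pm}$ are chosen here for illustration). For $i \neq j$, a single Product Replacement move acts on the free generators by one of

$$
R_{ij}^{\pm} \colon a_i \mapsto a_i a_j^{\pm 1}, \qquad L_{ij}^{\pm} \colon a_i \mapsto a_j^{\pm 1} a_i,
$$

with $a_k \mapsto a_k$ for every $k \neq i$. Each of these maps extends to an automorphism of $F_n$, whose inverse is the same move with the opposite sign. A generating tuple $(g_1, \dots, g_n)$ of $G$ is the same thing as a surjective homomorphism $\varphi \colon F_n \to G$ with $\varphi(a_k) = g_k$, and applying the move to the tuple replaces $\varphi$ by $\varphi \circ R_{ij}^{\pm}$ or $\varphi \circ L_{ij}^{\pm}$.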

This was the state of affairs in 2000. It was known that $\mathrm{Aut}(F_1) \cong \mathbb{Z} / 2 \mathbb{Z}$ has property $(T)$ for trivial reasons; $\mathrm{Aut}(F_2)$, which maps onto $\mathrm{GL}_2(\mathbb{Z})$, and $\mathrm{Aut}(F_3)$ were known not to have property $(T)$. It was also known that $\mathrm{GL}_m(\mathbb{Z})$ has property $(T)$ if and only if $m\geqslant 3$, so the fact that for small values of $n$ the groups $\mathrm{Aut}(F_n)$ (which are in many ways analogous to $\mathrm{GL}_n(\mathbb{Z})$) did not have property $(T)$ was not necessarily seen as a big problem. And there was the experimentally confirmed but theoretically mysterious effectiveness of Product Replacement.

The first signs of a breakthrough appeared in March 2017. Marek Kaluba and Piotr Nowak used a new approach designed by Narutaka Ozawa to prove property $(T)$ for $\mathrm{GL}_n(\mathbb{Z})$ with $n \in \{3,4,5\}$ by solving a very large optimisation problem on a computer. Their method was potentially applicable to $\mathrm{Aut}(F_n)$, and indeed in December 2017 Kaluba-Nowak-Ozawa proved property $(T)$ for $\mathrm{Aut}(F_5)$.
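As a rough sketch of what such an optimisation problem looks like (the symbols $\Gamma$, $S$, $\Delta$, $\lambda$ and $\xi_i$ are introduced here for illustration): for a group $\Gamma$ generated by a finite symmetric set $S$, write $\Delta = |S| \cdot 1 - \sum_{s \in S} s$ for the Laplacian in the real group ring $\mathbb{R}\Gamma$, and let $\xi^*$ denote the element obtained from $\xi$ by inverting every group element. Ozawa's criterion says that $\Gamma$ has property $(T)$ if and only if there are $\lambda > 0$ and $\xi_1, \dots, \xi_k \in \mathbb{R}\Gamma$ with

$$
\Delta^2 - \lambda \Delta = \sum_{i=1}^{k} \xi_i^* \xi_i .
$$

The right-hand side is a sum of Hermitian squares. Searching for such a decomposition, with the $\xi_i$ supported on a fixed finite set of group elements, is a semidefinite optimisation problem that a computer can attempt numerically.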

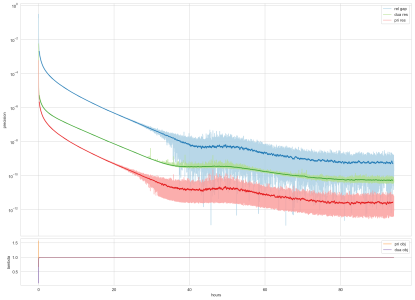

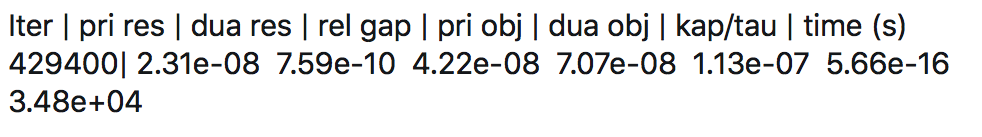

I joined the project in summer 2018. In September we devised a strategy which reduced the infinite collection of questions, "Does $\mathrm{Aut}(F_n)$ have property $(T)$?", indexed by natural numbers, to a single question: "Can a special element of the group ring $\mathbb{Z} \mathrm{Aut}(F_5)$ be written as a sum of Hermitian squares?". This is the sort of question that one can ask a computer about: the machine will attempt to find the desired sum of Hermitian squares. And that is what we did. Every 6 minutes the computer would produce a result like this:

We set up a Twitter account which would tweet the outcomes, and for a couple of weeks looking at these numbers was my favourite pastime. It was the first thing I checked in the morning, and the last before I went to bed. And then on the 12th of October the computation was finished: together with Kaluba and Nowak, we established property $(T)$ for $\mathrm{Aut}(F_n)$ for every $n>5$. (The solution to the remaining case of $\mathrm{Aut}(F_4)$ was recently announced by Martin Nitsche.)

In December 2018 we put the preprint on the arXiv. It was the end of this project, but 'a beginning of a beautiful friendship': I have been collaborating with Marek and Piotr ever since."

* For those who are interested, here is the accompanying code, which comes with detailed instructions on how to run it; one can run the code on a laptop and prove something very close to our main theorem in about 2 hours.