11:00

Further birational non-expansion

Abstract

17:00

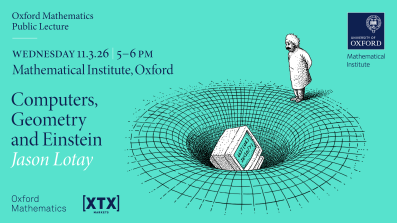

Computers, Geometry and Einstein - Jason Lotay

Computers have long been useful for studying mathematical problems. But recently computer techniques have been used to prove new theorems in geometry, specifically related to the study of gravity through Einstein's theory of General Relativity. This talk will describe these developments and what they might mean for the future.

Jason Lotay is Professor of Mathematics in the Mathematical Institute at the University of Oxford, and one of the inaugural Fellows of the Academy of Mathematical Sciences.

Please email @email to register to attend in person.

The lecture will be broadcast on the Oxford Mathematics YouTube Channel on Wednesday 25 March from 5 to 6 pm and will remain available to watch afterwards (no registration is needed for the online version).

The Oxford Mathematics Public Lectures are generously supported by XTX Markets.

13:15

Persistent Cycle Representatives and Generalized Persistence Landscapes in Codimension 1

Abstract

A common challenge in persistent homology is choosing "good" representative cycles for homology classes in a way compatible with persistence. In this talk, we discuss a geometric framework for codimension-1 persistent homology that addresses this issue using Alexander duality.

For an embedded filtered simplicial complex, connected components of the complement induce cycle representatives for a homology basis. The evolution of these cycles along the filtration can be tracked via the merge tree of the complement and the elder rule. This leads to the notion of cycle progression barcodes, associating to each persistence interval a sequence of representative cycles evolving through the filtration.
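As a rough illustration of the elder rule mentioned above, the following sketch computes a 0-dimensional barcode for a toy filtered graph with a union-find structure. It is a generic textbook-style example, not the speaker's construction: the function name `elder_rule_barcode`, the input format, and the toy data are all assumptions made here, and the vertices merely stand in for connected components of a complement.

```python
def elder_rule_barcode(births, edges):
    """Toy elder-rule computation (hypothetical helper, for illustration only).

    births: dict mapping each vertex to the filtration value at which it appears.
    edges:  list of (time, u, v) triples; the edge u-v appears at `time`.
    Returns a sorted list of (birth, death) bars; death is inf for survivors.
    """
    parent = {v: v for v in births}

    def find(v):
        # Follow parent pointers to the root, halving paths along the way.
        while parent[v] != v:
            parent[v] = parent[parent[v]]
            v = parent[v]
        return v

    bars = []
    for t, u, v in sorted(edges, key=lambda e: e[0]):
        ru, rv = find(u), find(v)
        if ru == rv:
            continue  # the edge closes a loop; no components merge
        # Elder rule: the component born earlier survives, the younger one dies at t.
        elder, younger = (ru, rv) if births[ru] <= births[rv] else (rv, ru)
        bars.append((births[younger], t))
        parent[younger] = elder  # the root is always the oldest vertex of its component

    # Components that never merge into an older one persist forever.
    roots = {find(v) for v in births}
    bars.extend((births[r], float("inf")) for r in roots)
    return sorted(bars)


births = {"a": 0, "b": 1, "c": 2}
edges = [(3, "a", "b"), (5, "b", "c")]
print(elder_rule_barcode(births, edges))
```

In this toy input, `b` merges into the older component of `a` at time 3 and `c` merges at time 5, so the younger component dies at each merge while `a` persists.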

Applying geometric functionals to these progressions produces generalized persistence landscapes, which extend classical persistence landscapes and allow geometric information about cycle representatives to be captured without fixing a single filtration value. This provides a way to distinguish data sets with similar persistent homology but different geometric structure.
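For orientation, the classical persistence landscape that these generalized landscapes extend can be sketched in a few lines. The k-th landscape function at t is the k-th largest value of max(0, min(t - b, d - t)) over all bars (b, d). The function name `landscape` and the sample bars are assumptions made here for illustration; the generalized version described in the abstract is not reproduced, since its precise definition is not given in this listing.

```python
def landscape(bars, k, t):
    """Classical k-th persistence landscape evaluated at t (illustrative sketch).

    bars: iterable of (birth, death) pairs with finite death values.
    k:    1-based index; k=1 gives the outermost landscape function.
    """
    # Each bar contributes a "tent" function peaking at the bar's midpoint.
    tents = sorted(
        (max(0.0, min(t - b, d - t)) for b, d in bars),
        reverse=True,
    )
    # Beyond the number of bars, the landscape is identically zero.
    return tents[k - 1] if k <= len(tents) else 0.0


bars = [(0, 4), (1, 3)]
print(landscape(bars, 1, 2.0))  # height of the tallest tent at t = 2
print(landscape(bars, 2, 2.0))  # height of the second tallest tent at t = 2
```

Two data sets with the same bars give the same classical landscape, which is why a construction that also uses geometric information about the cycle representatives can separate them.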