16:00

Forthcoming events in this series

16:00

Fridays@4 – From research to market: lessons from an academic founder

Abstract

Please join us for a fireside chat, hosted by OSE, between PQShield founder and visiting professor, Dr Ali El Kaafarani, and Sami Walter, associate at Oxford Sciences Enterprises (OSE).

Dr Ali El Kaafarani is the founder and CEO of PQShield, a post-quantum cryptography (PQC) company empowering organisations, industries and nations with quantum-resistant cryptography that is modernising the vital security systems and components of the world's technology supply chain.

In this chat, we’ll discuss Dr Ali El Kaafarani’s experience founding PQShield and lessons learned from spinning a company out from the Oxford ecosystem.

13:00

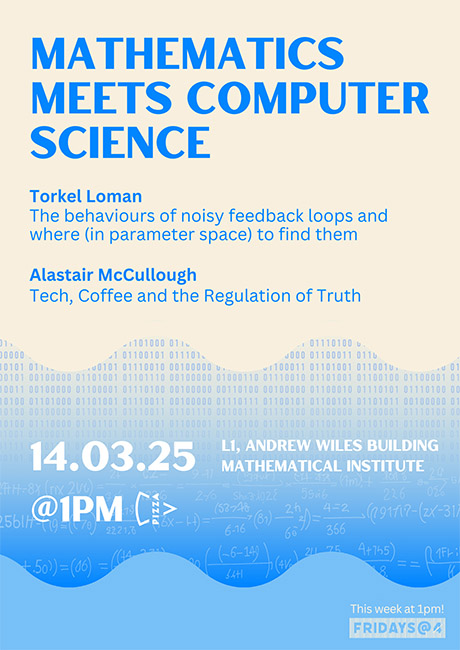

Mathematics meets Computer Science

Abstract

In this Fridays@4 event – for this week renamed Fridays@1 (with lunchtime pizza) – Torkel Loman from the Mathematics Institute and Alastair McCullough from the Department of Computer Science will present their talks.

Torkel Loman

The behaviours of noisy feedback loops and where (in parameter space) to find them

Alastair McCullough

Tech, Coffee, and the Regulation of Truth: An Enterprise Barista's Story

Torkel's abstract

Mixed positive/negative feedback loops (networks where a single component both activates and deactivates its own production) are common across biological systems, and are the subject of this talk. Here (inspired by systems for e.g. bacterial antibiotic resistance), we create a minimal mathematical model of such a feedback loop. Our model (a stochastic delay differential equation) depends on only 6 biologically interpretable parameters. We describe 10 distinct behaviours that such feedback loops can produce, and map their occurrence across the 6-dimensional parameter space.

16:00

Fridays@4 – Multiply Your Impact: Talking to the Public Creatively

Abstract

16:00

Fridays@4 – A start-up company? 10 things I wish I had known

Abstract

Are you thinking of launching your own start-up or considering joining an early-stage company? Navigating the entrepreneurial landscape can be both exciting and challenging. Join Pete for an interactive exploration of the unwritten rules and hidden insights that can make or break a start-up journey.

Drawing from personal experience, Pete's talk will offer practical wisdom for aspiring founders and team members, revealing the challenges and opportunities of building a new business from the ground up.

Whether you're an aspiring entrepreneur, a potential start-up team member, or simply curious about innovative businesses, you'll gain valuable perspectives on the realities of creating something from scratch.

This isn't a traditional lecture – it will be a lively conversation that invites participants to learn, share, and reflect on the world of start-ups. Come prepared to challenge your assumptions and discover practical insights that aren't found in standard business guides.

Speaker: Professor Pete Grindrod

16:00

Fridays@4 – Trading Options: Predicting the Future in More Ways Than One

Abstract

In the fast-paced world of trading, where exabytes of data and advanced mathematical models offer powerful insights, how do you harness these to anticipate market shifts and evolving prices? Numbers alone only tell part of the story. Beneath the surface lies the unpredictable force of human behaviour – the delicate balance of buyers and sellers shaping the market’s course.

In this talk, we’ll uncover how these forces intertwine, revealing insights that not only harness data but challenge conventional thinking about the future of trading.

Speaker: Chris Horrobin (Head of European and US people development for Optiver)

Speaker bio

Chris Horrobin is Head of European and US people development for Optiver. Chris started his career trading US and German bond options, adding currency and European index options into the mix before moving to focus primarily on index options. Chris spent his first three years in Amsterdam before transferring to Sydney.

During these years, Chris traded some of the biggest events of his career, including Brexit and Trump (first time around), and led the positional team in his last year before moving back to Europe. Chris then moved out of trading and into our training team, running our trading education space for four years and owning both the design and execution of our renowned internship and grad programs.

The Education Team at Optiver is central to the Optiver culture and focus on growth – both of employees and the company. Chris has now extended his remit to cover the professional development of hires throughout the business.

16:00

North meets South: ECR Colloquium

Abstract

North meets South is a tradition founded by and for early-career researchers. One speaker from the North of the Andrew Wiles Building and one speaker from the South each present an idea from their work in an accessible yet intriguing way.

North Wing

Speaker: Paul-Hermann Balduf

Title: Statistics of Feynman integrals

Abstract: In quantum field theory, one way to compute predictions for physical observables is perturbation theory, which means that the sought-after quantity is expressed as a formal power series in some coupling parameter. The coefficients of the power series are Feynman integrals, which are, in general, very complicated functions of the masses and momenta involved in the physical process. However, there is also a complementary difficulty: at higher orders, millions of distinct Feynman integrals contribute to the same series coefficient.

My talk concerns the statistical properties of Feynman integrals, specifically for phi^4 theory in 4 dimensions. I will demonstrate that the Feynman integrals under consideration follow a fairly regular distribution which is almost unchanged for higher orders in perturbation theory. The value of a given Feynman integral is correlated with many properties of the underlying Feynman graph, which can be used for efficient importance sampling of Feynman integrals. Based on arXiv:2305.13506 and arXiv:2403.16217.

South Wing

Speaker: Marc Suñé

Title: Extreme mechanics of thin elastic objects

Abstract: Exceptionally hard (or soft) materials, materials that are active and respond to different stimuli, elastic objects that undergo large deformations: advances in recent decades in robotics, 3D printing and, more broadly, materials engineering have created a new world of opportunities to test the (extreme) mechanics of solids.

In this colloquium I will focus on the elastic instabilities of slender objects. In particular, I will discuss the transverse actuation of a stretched elastic sheet. This problem is a peculiar example of buckling under tension and it has a vast potential scope of applications, from understanding the mechanics of graphene and cell tissues, to the engineering of meta-materials.

16:00

Departmental Colloquium: From Group Theory to Post-quantum Cryptography (Delaram Kahrobaei)

Abstract

The goal of Post-Quantum Cryptography (PQC) is to design cryptosystems which are secure against classical and quantum adversaries. A topic of fundamental research for decades, the status of PQC drastically changed with the NIST PQC standardization process. Recently there have been AI attacks on some of the proposed PQC systems. In this talk, we will give an overview of the progress of quantum computing and how it will affect the security landscape.

Group-based cryptography is a relatively new family in post-quantum cryptography, with high potential. I will give a general survey of the status of post-quantum group-based cryptography and present some recent results.

In the second part of my talk, I will speak about post-quantum hash functions using special linear groups, with implications for post-quantum blockchain technologies.

16:00

Departmental Colloquium: Fluid flow and elastic flexure – mathematical modelling of the transient response of ice sheets in a changing climate (Jerome Neufeld) CANCELLED

Abstract

CANCELLED DUE TO ILLNESS

The response of the Greenland and Antarctic ice sheets to a changing climate is one of the largest sources of uncertainty in future sea level predictions. The behaviour of the subglacial environment, where ice meets hard rock or soft sediment, is a key determinant in the flux of ice towards the ocean, and hence the loss of ice over time. Predicting how ice sheets respond on a range of timescales brings together mathematical models of the elastic and viscous response of the ice, subglacial sediment and water, and is a rich playground where simplified models of the contact between ice, rock and ocean can shed light on very large scale questions. In this talk we’ll see how these simplified models can make sense of a variety of field and laboratory data in order to understand the dynamical phenomena controlling the transient response of large ice sheets.

16:00

3 Minute Thesis competition

Abstract

16:00

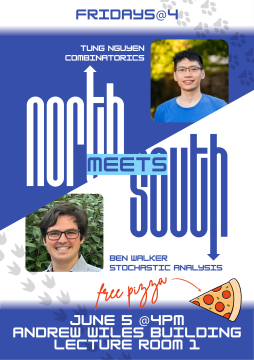

North meets South

Abstract

There will be free pizza provided for all attendees directly after the event just outside L1, so please do come along!

North Wing

Speaker: Alexandru Pascadi

Title: Points on modular hyperbolas and sums of Kloosterman sums

Abstract: Given a positive integer c, how many integer points (x, y) with xy = 1 (mod c) can we find in a small box? The dual of this problem concerns bounding certain exponential sums, which show up in methods from the spectral theory of automorphic forms. We'll explore how a simple combinatorial trick of Cilleruelo-Garaev leads to good bounds for these sums; following recent work of the speaker, this ultimately has consequences for multiple problems in analytic number theory (such as counting primes in arithmetic progressions to large moduli, and studying the greatest prime factors of quadratic polynomials).
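
As a rough illustration of the counting question posed in this abstract, the Python sketch below counts points on the modular hyperbola xy = 1 (mod c) inside a box by direct enumeration over x. The function name `count_modular_hyperbola_points` and the box convention 1 ≤ x ≤ x_max, 1 ≤ y ≤ y_max are assumptions made for this example. It is not the method of the talk, which concerns bounds rather than enumeration.

```python
from math import gcd


def count_modular_hyperbola_points(c, x_max, y_max):
    """Count integer points (x, y) with 1 <= x <= x_max, 1 <= y <= y_max
    and x * y = 1 (mod c). Hypothetical helper, for illustration only."""
    count = 0
    for x in range(1, x_max + 1):
        if gcd(x, c) != 1:
            continue  # x is not invertible modulo c, so no y can work
        inv = pow(x, -1, c)  # modular inverse of x (needs Python 3.8+)
        first = inv if inv >= 1 else c  # smallest positive y congruent to inv
        if first <= y_max:
            # y runs over first, first + c, first + 2c, ... up to y_max
            count += (y_max - first) // c + 1
    return count
```

For instance, with c = 7 and the box 1 ≤ x, y ≤ 3, only the point (1, 1) is counted, since the inverses of 2 and 3 modulo 7 are 4 and 5, which lie outside the box.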

South Wing

Speaker: Tim LaRock

Title: Encapsulation Structure and Dynamics in Hypergraphs

Abstract: Within the field of Network Science, hypergraphs are a powerful modelling framework used to represent systems where interactions may involve an arbitrary number of agents, rather than exactly two agents at a time as in traditional network models. As part of a recent push to understand the structure of these group interactions, in this talk we will explore the extent to which smaller hyperedges are subsets of larger hyperedges in real-world and synthetic hypergraphs, a property that we call encapsulation. Building on the concept of line graphs, we develop measures to quantify the relations existing between hyperedges of different sizes and, as a byproduct, the compatibility of the data with a simplicial complex representation, whose encapsulation would be maximal. Finally, we will turn to the impact of the observed structural patterns on diffusive dynamics, focusing on a variant of threshold models, called encapsulation dynamics, and demonstrate that non-random patterns can accelerate spreading through the system.
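
To make the notion of encapsulation concrete, here is a minimal Python sketch under simple assumptions: a hypergraph is given as a collection of vertex sets, a smaller hyperedge is "encapsulated" by a larger one when it is a proper subset of it, and a hypergraph is treated as compatible with a simplicial complex when every non-empty proper subset of every hyperedge is itself a hyperedge. The function names `encapsulation_pairs` and `is_downward_closed` are hypothetical, and these are not necessarily the measures developed by the speaker.

```python
from itertools import combinations


def encapsulation_pairs(hyperedges):
    """Return all (larger, smaller) pairs of hyperedges in which the
    smaller hyperedge is a proper subset of the larger one."""
    edges = {frozenset(e) for e in hyperedges}
    # For frozensets, `small < big` tests for a proper subset.
    return {(big, small) for big in edges for small in edges if small < big}


def is_downward_closed(hyperedges):
    """Return True if every non-empty proper subset of every hyperedge is
    also a hyperedge, as would hold in a simplicial complex."""
    edges = {frozenset(e) for e in hyperedges}
    for edge in edges:
        for size in range(1, len(edge)):
            for subset in combinations(edge, size):
                if frozenset(subset) not in edges:
                    return False
    return True
```

On the toy hypergraph `[{1, 2, 3}, {1, 2}, {3}, {4, 5}]`, the hyperedges `{1, 2}` and `{3}` are both encapsulated by `{1, 2, 3}`, but the hypergraph is not downward closed because, for example, `{1, 3}` is missing.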

16:00

Talks on Talks

Abstract

What makes a good talk? This year, graduate students and postdocs will give a series of talks on how to give talks! There may even be a small prize for the audience’s favourite.

If you’d like to have a go at informing, entertaining, or just have an axe to grind about a particularly bad talk you had to sit through, we’d love to hear from you (you can email Ric Wade or ask any of the organizers).

16:00

Maths meets Stats

Abstract

Speaker: Mattia Magnabosco (Newton Fellow, Maths)

Title: Synthetic Ricci curvature bounds in sub-Riemannian manifolds

Abstract: In Riemannian manifolds, a uniform bound on the Ricci curvature tensor allows one to control the volume growth along the geodesic flow. Building upon this observation, Lott, Sturm and Villani introduced a synthetic notion of curvature-dimension bounds in the non-smooth setting of metric measure spaces. This condition, called CD(K,N), is formulated in terms of the optimal transport interpolation of measures and consists in a convexity property of the Rényi entropy functionals along Wasserstein geodesics. The CD(K,N) condition represents a lower Ricci curvature bound by K and an upper bound on the dimension by N, and it is coherent with the smooth setting, as in a Riemannian manifold it is equivalent to a lower bound on the Ricci curvature tensor. However, the same relation between curvature and CD(K,N) condition does not hold for sub-Riemannian (and sub-Finsler) manifolds.

Speaker: Rebecca Lewis (Florence Nightingale Bicentenary Fellow, Stats)

Title: High-dimensional statistics

Abstract: Due to the increasing ease with which we collect and store information, modern datasets have grown in size. Whilst these datasets have the potential to yield new insights in a variety of areas, extracting useful information from them can be difficult. In this talk, we will discuss these challenges.

15:30

Inaugural Green Lecture: Tackling the hidden costs of computational science: GREENER principles for environmentally sustainable research

Abstract

From genetic studies and astrophysics simulations to statistical modelling and AI, scientific computing has enabled amazing discoveries and there is no doubt it will continue to do so. However, the corresponding environmental impact is a growing concern in light of the urgency of the climate crisis, so what can we all do about it? Tackling this issue and making it easier for scientists to engage with sustainable computing is what motivated the Green Algorithms project. Through the prism of the GREENER principles for environmentally sustainable science, we will discuss what we learned along the way, how to estimate the impact of our work and what levers scientists and institutions have to make their research more sustainable. We will also debate what hurdles exist and what is still needed moving forward.

PLEASE REGISTER FOR THE EVENT HERE: https://www.stats.ox.ac.uk/events/inaugural-green-lecture-dr-loic-lanne…

Dr Loïc Lannelongue is a Research Associate in Biomedical Data Science in the Heart and Lung Research Institute at the University of Cambridge, UK, and the Cambridge-Baker Systems Genomics Initiative. He leads the Green Algorithms project, an initiative promoting more environmentally sustainable computational science. His research interests also include radiogenomics, i.e. combining medical imaging and genetic information with machine learning to better understand and treat cardiovascular diseases. He obtained an MSc from ENSAE, the French National School of Statistics, and an MSc in Statistical Science from the University of Oxford, before doing his PhD in Health Data Science at the University of Cambridge. He is a Software Sustainability Institute Fellow, a Post-doctoral Associate at Jesus College, Cambridge, and an Associate Fellow of the Higher Education Academy.

16:00

Maths meets Stats

Abstract

Speaker: James Taylor

Title: D-Modules and p-adic Representations

Abstract: The representation theory of finite groups is a beautiful and well-understood subject. However, when one considers more complicated groups things become more interesting, and to classify their representations is often a much harder problem. In this talk, I will introduce the classical theory, the particular groups I am interested in, and explain how one might hope to understand their representations through the use of D-modules - the algebraic incarnation of differential equations.

Speaker: Anthony Webster

Title: An Introduction to Epidemiology and Causal Inference

Abstract: This talk will introduce epidemiology and causal inference from the perspective of a statistician and former theoretical physicist. Despite their studies being underpinned by deep and often complex mathematics, epidemiologists are generally more concerned with seemingly mundane information about the relationships between potential risk factors and disease. Because of this, I will argue that a good epidemiologist with minimal statistical knowledge will often do better than a highly trained statistician. I will also argue that causal assumptions are a necessary part of epidemiology, should be made more explicitly, and allow a much wider range of causal inferences to be explored. In the process, I will introduce ideas from epidemiology and causal inference such as Mendelian Randomisation and the "do calculus", methodological approaches that will increasingly underpin data-driven population research.

16:00

Departmental Colloquium: The role of depth in neural networks: function space geometry and learnability

Abstract

Neural network architectures play a key role in determining which functions are fit to training data and the resulting generalization properties of learned predictors. For instance, imagine training an overparameterized neural network to interpolate a set of training samples using weight decay; the network architecture will influence which interpolating function is learned.

In this talk, I will describe new insights into the role of network depth in machine learning using the notion of representation costs – i.e., how much it “costs” for a neural network to represent some function f. Understanding representation costs helps reveal the role of network depth in machine learning. First, we will see that there is a family of functions that can be learned with depth-3 networks when the number of samples is polynomial in the input dimension d, but which cannot be learned with depth-2 networks unless the number of samples is exponential in d. Furthermore, no functions can easily be learned with depth-2 networks while being difficult to learn with depth-3 networks.

Together, these results mean deeper networks have an unambiguous advantage over shallower networks in terms of sample complexity. Second, I will show that adding linear layers to a ReLU network yields a representation cost that favors functions with latent low-dimensional structure, such as single- and multi-index models. Together, these results highlight the role of network depth from a function space perspective and yield new tools for understanding neural network generalization.

Rebecca Willett is a Professor of Statistics and Computer Science & the Faculty Director of AI at the Data Science Institute, with a courtesy appointment at the Toyota Technological Institute at Chicago. Her research is focused on machine learning foundations, scientific machine learning, and signal processing. She is the Deputy Director for Research at the NSF-Simons Foundation National Institute for Theory and Mathematics in Biology and a member of the Executive Committee for the NSF Institute for the Foundations of Data Science. She is the Faculty Director of the Eric and Wendy Schmidt AI in Science Postdoctoral Fellowship and helps direct the Air Force Research Lab University Center of Excellence on Machine Learning.

16:00

Demystifying careers for mathematicians in the Civil Service

Abstract

Sarah Livermore has worked in the Civil Service for over 10 years, using the maths skills gained in her physics degrees (MPhys, DPhil) whilst studying at Oxford. In this session she’ll discuss some of the roles available to people with a STEM background in the Civil Service, a ‘day in the life’ of a civil servant, typical career paths and how to apply.

16:00

Conferences and networking

Abstract

Conferences and networking are important parts of academic life, particularly early in your academic career. But how do you make the most out of conferences? And what are the dos and don'ts of networking? Learn about the answers to these questions and more in this panel discussion by postdocs from across the Mathematical Institute.

16:00

Creating Impact for Maths Research via Consulting, Licensing and Spinouts

Abstract

Oxford University Innovation, the University’s commercialisation team, will explain the support they can give to Maths researchers who want to generate commercial impact from their work and expertise. In addition to an overview of consulting, this talk will explain how mathematical techniques and software can be protected and commercialised.

16:00

Graduate Jobs in finance and the recruitment process

Abstract

Join us for a session with Keith Macksoud, Executive Director at global recruitment consultant Options Group in London, who has more than 20 years’ previous experience in Prime Brokerage Sales at Morgan Stanley, Citi, and Deutsche Bank. Keith will discuss the recruitment process for financial institutions, and how to increase your chances of a successful application.

Keith will detail his finance background in Prime Brokerage and provide students with an exclusive look behind the scenes of executive search and strategic consulting firm Options Group. We will look at what Options Group does, how executive search firms work and the Firm’s 30-year track record of placing individuals at many of the industry’s largest and most successful global investment banks, investment managers and other financial services-related organisations.

About Options Group

Options Group is a leading global executive search and strategic consulting firm specializing in financial services including capital markets, global markets, alternative investments, hedge funds, and private banking/wealth management.

16:00

North meets South

Abstract

Speaker: Cedric Pilatte

Title: Convolution of integer sets: a galaxy of (mostly) open problems

Title: Manifold-Free Riemannian Optimization

Abstract: Optimization problems constrained to a smooth manifold can be solved via the framework of Riemannian optimization. To that end, a geometrical description of the constraining manifold, e.g., tangent spaces, retractions, and cost function gradients, is required. In this talk, we present a novel approach that allows performing approximate Riemannian optimization based on a manifold learning technique, in cases where only a noiseless sample set of the cost function and the manifold’s intrinsic dimension are available.

16:00

Mathematical Societies and Organisations

Abstract

16:00

Departmental Colloquium: Ana Caraiani

Abstract

Title: Elliptic curves and modularity

Abstract: The goal of this talk is to give you a glimpse of the Langlands program, a central topic at the intersection of algebraic number theory, algebraic geometry and representation theory. I will focus on a celebrated instance of the Langlands correspondence, namely the modularity of elliptic curves. In the first part of the talk, I will give an explicit example, discuss the different meanings of modularity for rational elliptic curves, and mention applications. In the second part of the talk, I will discuss what is known about the modularity of elliptic curves over more general number fields.

16:00

Maths meets Stats

Abstract

Speaker: Ximena Laura Fernandez

Title: Let it Be(tti): Topological Fingerprints for Audio Identification

Abstract: Ever wondered how music recognition apps like Shazam work or why they sometimes fail? Can Algebraic Topology improve current audio identification algorithms? In this talk, I will discuss recent collaborative work with Spotify, where we extract low-dimensional homological features from audio signals for efficient song identification despite continuous obfuscations. Our approach significantly improves accuracy and reliability in matching audio content under topological distortions, including pitch and tempo shifts, compared to Shazam.

Talk based on the work: https://arxiv.org/pdf/2309.03516.pdf

Speaker: Brett Kolesnik

Title: Coxeter Tournaments

Abstract: We will present ongoing joint work with three Oxford PhD students: Matthew Buckland (Stats), Rivka Mitchell (Math/Stats) and Tomasz Przybyłowski (Math). We met last year as part of the course SC9 Probability on Graphs and Lattices. Connections with geometry (the permutahedron and generalizations), combinatorics (tournaments and signed graphs), statistics (paired comparisons and sampling) and probability (coupling and rapid mixing) will be discussed.

16:00

Careers outside academia

Abstract

What opportunities are available outside of academia? What skills beyond a strong academic background do companies look for in candidates transitioning to industry? Come along and hear from video technology company V-Nova and Dr Anne Wolfes from the Careers Service to get some invaluable advice on careers outside academia.

16:00

North meets South

Abstract

Speaker: Lasse Grimmelt (North Wing)

Title: Modular forms and the twin prime conjecture

Abstract: Modular forms are one of the most fruitful areas in modern number theory. They play a central part in Wiles's proof of Fermat's Last Theorem and in Langlands's far-reaching vision. Curiously, some of our best approximations to the twin prime conjecture are also powered by them. In the existing literature this connection is highly technical and difficult to approach. In work in progress on these types of questions, my coauthor and I found a different perspective based on a quite simple idea. In this way we get an easy explanation and good intuition for why such a connection should exist. I will explain this in this talk.

Speaker: Yang Liu (South Wing)

Title: Obtaining Pseudo-inverse Solutions With MINRES

Abstract: The celebrated minimum residual method (MINRES) has seen great success and widespread use in solving linear least-squares problems involving Hermitian matrices, with further extensions to complex symmetric settings. Unless the system is consistent, whereby the right-hand side vector lies in the range of the matrix, MINRES is not guaranteed to obtain the pseudo-inverse solution. We propose a novel and remarkably simple lifting strategy that seamlessly integrates with the final MINRES iteration, enabling us to obtain the minimum norm solution with negligible additional computational costs. We also study our lifting strategy in a diverse range of settings encompassing Hermitian and complex symmetric systems as well as those with semi-definite preconditioners.

16:00

Departmental Colloquium (Alicia Dickenstein) - Algebraic geometry tools in systems biology

Abstract

In recent years, methods and concepts of algebraic geometry, particularly those of real and computational algebraic geometry, have been used in many applied domains. In this talk, aimed at a broad audience, I will review applications to molecular biology. The goal is to analyze standard models in systems biology to predict dynamic behavior in regions of parameter space without the need for simulations. I will also mention some challenges in the field of real algebraic geometry that arise from these applications.

Alicia Dickenstein is an Argentine mathematician known for her work on algebraic geometry, particularly toric geometry, tropical geometry, and their applications to biological systems.

16:00

Academic job application workshop

Abstract

Job applications involve a lot of work and can be overwhelming. Join us for a workshop and Q+A session focused on breaking down academic applications: we’ll talk about approaching reference letter writers, writing research statements, and what makes a great CV and covering letter.

16:00

Departmental Colloquium (Tamara Kolda) - Generalized Tensor Decomposition: Utility for Data Analysis and Mathematical Challenges

Abstract

16:00

You and Your Supervisor

Abstract

How do you make the most of graduate supervisions? Whether you are a first year graduate wanting to learn about how to manage meetings with your supervisor, or a later year DPhil student, postdoc or faculty member willing to share their experiences and give advice, please come along to this informal discussion led by DPhil students for the first Fridays@4 session of the term. You can also continue the conversation and learn more about graduate student life at Oxford at Happy Hour afterwards.

16:00

Departmental Colloquium

George Lusztig is the Abdun-Nur Professor of Mathematics. He joined the MIT mathematics faculty in 1978 following a professorship appointment at the University of Warwick, 1974-77. He was appointed Norbert Wiener Professor at MIT 1999-2009.

Lusztig graduated from the University of Bucharest in 1968, and received both the M.A. and Ph.D. from Princeton University in 1971 under the direction of Michael Atiyah and William Browder. Professor Lusztig works on geometric representation theory and algebraic groups. He has received numerous research distinctions, including the Berwick Prize of the London Mathematical Society (1977), the AMS Cole Prize in Algebra (1985), the Brouwer Medal of the Dutch Mathematical Society (1999), and the AMS Leroy P. Steele Prize for Lifetime Achievement (2008), "for entirely reshaping representation theory, and in the process changing much of mathematics."

Professor Lusztig is a Fellow of the Royal Society (1983), Fellow of the American Academy of Arts & Sciences (1991), and Member of the National Academy of Sciences (1992). He was the recipient of the Shaw Prize (2014) and the Wolf Prize (2022).

16:00

North meets South

Abstract

North Wing talk: Dr Thomas Karam

Title: Ranges control degree ranks of multivariate polynomials on finite prime fields.

Abstract: Let $p$ be a prime. It has been known since work of Green and Tao (2007) that if a polynomial $P:\mathbb{F}_p^n \to \mathbb{F}_p$ with degree $2 \le d \le p-1$ is not approximately equidistributed, then it can be expressed as a function of a bounded number of polynomials each with degree at most $d-1$. Since then, this result has been refined in several directions. We will explain how this kind of statement may be used to deduce an analogue where both the assumption and the conclusion are strengthened: if for some $1 \le t < d$ the image $P(\mathbb{F}_p^n)$ does not contain the image of a non-constant one-variable polynomial with degree at most $t$, then we can obtain a decomposition of $P$ in terms of a bounded number of polynomials each with degree at most $\lfloor d/(t+1) \rfloor$. We will also discuss the case where we replace the image $P(\mathbb{F}_p^n)$ by for instance $P(\{0,1\}^n)$ in the assumption.
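
As a small numerical illustration of what failure of equidistribution looks like, the Python sketch below tabulates how often a polynomial takes each value on $\mathbb{F}_p^n$ by brute force. The helper names `value_distribution` and `max_bias`, and the use of the largest deviation from the uniform frequency $1/p$ as a measure of bias, are assumptions made for this example rather than definitions from the talk.

```python
from collections import Counter
from itertools import product


def value_distribution(poly, p, n):
    """Count how often each value of F_p is taken by poly on F_p^n.
    `poly` is any Python function of n integer arguments."""
    counts = Counter({value: 0 for value in range(p)})
    for point in product(range(p), repeat=n):
        counts[poly(*point) % p] += 1
    return counts


def max_bias(counts, p, n):
    """Largest deviation of a value's frequency from the uniform
    frequency 1/p (an illustrative notion of bias)."""
    total = p ** n
    return max(abs(counts[value] / total - 1 / p) for value in range(p))


# A product of two variables over F_5 is visibly biased towards 0.
xy_counts = value_distribution(lambda x, y: x * y, 5, 2)
```

Here the polynomial $xy$ over $\mathbb{F}_5$ takes the value 0 at 9 of the 25 points and each non-zero value at 4 points. Enumeration costs $p^n$ evaluations, so this is only feasible for very small $p$ and $n$.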

South Wing talk: Dr Hamid Rahkooy

Title: Toric Varieties in Biochemical Reaction Networks

Abstract: Toric varieties are interesting objects for algebraic geometers as they have many properties. On the other hand, toric varieties appear in many applications. In particular, dynamics of many biochemical reactions lead to toric varieties. In this talk we discuss how to test toricity algorithmically, using computational algebra methods, e.g., Gröbner bases and quantifier elimination. We show experiments on real world models of reaction networks and observe that many biochemical reactions have toric steady states. We discuss complexity bounds and how to improve computations in certain cases.

16:00

OUI: Consultancy 101

Abstract

Come to this session to learn how to get started in consultancy from Dawn Gordon at Oxford University Innovation (OUI). After an introduction to what consultancy is, we'll explore case studies of consultancy work performed by mathematicians and statisticians within the university. This session will also include practical advice on how you can explore consultancy opportunities alongside your research work, from finding potential clients to the support that OUI can offer.

16:00

Looking after our mental health in an academic environment

Abstract

To tie in with mental health awareness week, in this session we'll give a brief overview of the mental health support available through the department and university, followed by a panel discussion on how we can look after our mental health in an academic setting. We're pleased that several of our department Mental Health First Aiders will be panellists - come along for hints and tips on maintaining good mental health and supporting your colleagues and friends.

16:00

SIAM Student Chapter: 3-minute thesis competition

Abstract

For week 4's Fridays@4 session we welcome the SIAM-IMA student chapter, running their annual Three Minute Thesis competition.

The Three Minute Thesis competition challenges graduate students to present their research in a clear and engaging manner within a strict time limit of three minutes. Each presenter will be allowed to use only one static slide to support their presentation, and the panel of esteemed judges (details TBC) will evaluate the presentations based on criteria such as clarity, pacing, engagement, enthusiasm, and impact. Each presenter will receive a free mug and there is £250 in cash prizes for the winners. If you're a graduate student, sign up here (https://oxfordsiam.com/3mt) by Friday of week 3 to take part! And if not, come along to support your DPhil friends and colleagues, and to learn about the exciting maths being done by our research students.

16:00

Departmental Colloquium: Liliana Borcea

Abstract

Title: When data driven reduced order modelling meets full waveform inversion

Abstract:

This talk is concerned with the following inverse problem for the wave equation: Determine the variable wave speed from data gathered by a collection of sensors, which emit probing signals and measure the generated backscattered waves. Inverse backscattering is an interdisciplinary field driven by applications in geophysical exploration, radar imaging, non-destructive evaluation of materials, etc. There are two types of methods:

(1) Qualitative (imaging) methods, which address the simpler problem of locating reflective structures in a known host medium.

(2) Quantitative methods, also known as velocity estimation.

Typically, velocity estimation is formulated as a PDE-constrained optimization, where the data are fit in the least squares sense by the wave computed at the search wave speed. The increase in computing power has led to growing interest in this approach, but there is a fundamental impediment, which manifests especially for high frequency data: The objective function is not convex and has numerous local minima even in the absence of noise.

The main goal of the talk is to introduce a novel approach to velocity estimation, based on a reduced order model (ROM) of the wave operator. The ROM is called data driven because it is obtained from the measurements made at the sensors. The mapping between these measurements and the ROM is nonlinear, and yet the ROM can be computed efficiently using methods from numerical linear algebra. More importantly, the ROM can be used to define a better objective function for velocity estimation, so that gradient based optimization can succeed even for a poor initial guess.

Liliana Borcea is the Peter Field Collegiate Professor of Mathematics at the University of Michigan. Her research interests are in scientific computing and applied mathematics, including the scattering and transport of electromagnetic waves.

15:30

Joint Maths and Stats Colloquium: Understanding neural networks and quantification of their uncertainty via exactly solvable models

Abstract

The affinity between statistical physics and machine learning has a long history. Theoretical physics often proceeds in terms of solvable synthetic models; I will describe the related line of work on solvable models of simple feed-forward neural networks. I will then discuss how this approach allows us to analyze uncertainty quantification in neural networks, a topic that gained urgency in the dawn of widely deployed artificial intelligence. I will conclude with what I perceive as important specific open questions in the field.

The Lecture will be followed by a Drinks Reception in the ground floor social area. To help with catering arrangements, please book your place here https://forms.office.com/e/Nw3qSZtzCs.

Lenka Zdeborová is a Professor of Physics and Computer Science at École Polytechnique Fédérale de Lausanne, where she leads the Statistical Physics of Computation Laboratory. She received a PhD in physics from University Paris-Sud and Charles University in Prague in 2008. She spent two years in the Los Alamos National Laboratory as the Director's Postdoctoral Fellow. Between 2010 and 2020, she was a researcher at CNRS, working in the Institute of Theoretical Physics in CEA Saclay, France. In 2014, she was awarded the CNRS bronze medal; in 2016, the Philippe Meyer prize in theoretical physics and an ERC Starting Grant; in 2018, the Irène Joliot-Curie prize; in 2021, the Gibbs lectureship of AMS and the Neuron Fund award. Lenka's expertise is in applications of concepts from statistical physics, such as advanced mean field methods, the replica method and related message-passing algorithms, to problems in machine learning, signal processing, inference and optimization. She enjoys erasing the boundaries between theoretical physics, mathematics and computer science.

16:00

Pathways to independent research: fellowships and grants.

Abstract

Join us for our first Fridays@4 session of Trinity about different academic routes people take post-PhD, with a particular focus on fellowships and grants. We’ll hear from Jason Lotay about his experiences on both sides of the application process, as well as hear about the experiences of ECRs in the South Wing, North Wing, and Statistics. Towards the end of the hour we’ll have a Q+A session with the whole panel, where you can ask any questions you have around this topic!

16:00

Opportunities Outside of Academia and Navigating the Transition to Industry - Modelling Climate Change at RMS Moody's

Abstract

Dr. Keven Roy from RMS Moody's Analytics (who are currently hiring!) will share his experience of transitioning from academia to industry, discussing his fascinating work in modelling climate change and how his mathematical background has helped him succeed in industry. After the approximately 20-minute talk, we'll hold a Q&A session to discuss the importance of considering industry in your job search. Aimed primarily at PhD students and postdocs, this session will explore options beyond academia that can provide a fulfilling career (as well as good work-life balance, and financial compensation!).

Join us for a thought-provoking discussion at Fridays@4 to expand your career horizons and help you make informed decisions about your future. There will be lots of time for Q&A in this session, but if you have questions for Keven you can also send them in advance to Jess Crawshaw (session organiser) - @email.

16:00

What makes a good academic discussion? A panel event

Abstract

Chair: Ian Hewitt (Associate HoD (People))

Panel:

James Sparks (Head of Department)

Helen Byrne (winner of MPLS Outstanding Supervisor Awards for 2022)

Ali Goodall (Head of Faculty Services and HR)

Matija Tapuskovic (EPSRC Postdoctoral Research Fellow and JRF at Corpus Christi)

Scientific discussions with colleagues, at conferences and seminars, during supervisions and collaborations, are a crucial part of our research process. How can we ensure our academic discussions are fruitful, respectful, and a positive experience for everyone involved? What factors and power dynamics can impact our conversations? How can we make sure everyone’s voice is heard and respected? This panel discussion will probe these questions and encourage us all to reflect on how we approach our academic discussions.

16:00

North meets South Colloquium

Abstract

Speaker: Dr Aleksander Horawa (North Wing)

Title: Bitcoin, elliptic curves, and this building

Abstract:

We will discuss two motivations to work on Algebraic Number Theory: applications to cryptography, and fame and fortune. For the first, we will explain how Bitcoin and other companies use Elliptic Curves to digitally sign messages. For the latter, we will introduce two famous problems in Number Theory: Fermat's Last Theorem, worth a name on this building, and the Birch and Swinnerton-Dyer conjecture, worth $1,000,000 according to some people in this building (Clay Mathematics Institute).

Speaker: Dr Jemima Tabeart (South Wing)

Title: Numerical linear algebra for weather forecasting

Abstract:

The quality of a weather forecast is strongly determined by the accuracy of the initial condition. Data assimilation methods allow us to combine prior forecast information with new measurements in order to obtain the best estimate of the true initial condition. However, many of these approaches require the solution of an enormous least-squares problem. In this talk I will discuss some mathematical and computational challenges associated with data assimilation for numerical weather prediction, and show how structure-exploiting numerical linear algebra approaches can lead to theoretical and computational improvements.

16:00

Introducing Entrepreneurship, Commercialisation and Consultancy

Abstract

This session will introduce the opportunities for entrepreneurship and generating commercial impact available to researchers and students across MPLS. Representatives from the Maths Institute and across the university will discuss training and resources to help you begin enterprising and develop your ideas. We will hear from Paul Gass and Dawn Gordon about the support that can be provided by Oxford University Innovation, discussing commercialisation of research findings, consultancy, utilising your expertise and the protection and licensing of Intellectual Property.

Please see below slides from the talk:

20230217 Short Seminar - Maths Fri@4_FINAL- Dept (1)_0.pdf

16:00

Departmental Colloquium

Abstract

What is curiosity? Is it an emotion? A behavior? A cognitive process? Curiosity seems to be an abstract concept—like love, perhaps, or justice—far from the realm of those bits of nature that mathematics can possibly address. However, contrary to intuition, it turns out that the leading theories of curiosity are surprisingly amenable to formalization in the mathematics of network science. In this talk, I will unpack some of those theories, and show how they can be formalized in the mathematics of networks. Then, I will describe relevant data from human behavior and linguistic corpora, and ask which theories that data supports. Throughout, I will make a case for the position that individual and collective curiosity are both network building processes, providing a connective counterpoint to the common acquisitional account of curiosity in humans.

Title: “Mathematical models of curiosity”

Prof. Bassett is the J. Peter Skirkanich Professor at the University of Pennsylvania, with appointments in the Departments of Bioengineering, Electrical & Systems Engineering, Physics & Astronomy, Neurology, and Psychiatry. They are also an external professor of the Santa Fe Institute. Bassett is most well-known for blending neural and systems engineering to identify fundamental mechanisms of cognition and disease in human brain networks.

Statistics' Florence Nightingale Lecture

Abstract

I will discuss a line of work on estimating causal effects from observational data. In the first part of the talk, I will discuss identification and estimation of causal effects when the underlying causal graph is known, using adjustment. In the second part, I will discuss what one can do when the causal graph is unknown. Throughout, examples will be used to illustrate the concepts and no background in causality is assumed.

Title: “Causal learning from observational data”

Please register in advance using the online form: https://www.stats.ox.ac.uk/events/florence-nightingale-lecture-2023

Marloes Henriette Maathuis is a Dutch statistician known for her work on causal inference using graphical models, particularly in high-dimensional data from applications in biology and epidemiology. She is a professor of statistics at ETH Zurich in Switzerland.

16:00

How to give a talk

Abstract

In this session, we will hold a panel discussion on how to best give an academic talk. Among other topics, we will focus on techniques for engaging your audience, for determining the level and technical details of the talk, and for giving both blackboard and slide presentations. The session will begin with a directed panel discussion before opening up to questions from the audience.

16:00

Departmental Colloquium

Abstract

The basic question in prime number theory is to try to understand the number of primes in some interesting set of integers. Unfortunately, many of the most basic and natural examples are famous open problems which are over 100 years old!

We aim to give an accessible survey of (a selection of) the main results and techniques in prime number theory. In particular we highlight progress on some of these famous problems, as well as a selection of our favourite problems for future progress.

Title: “Prime numbers: Techniques, results and questions”

Maths Meets Stats

Abstract

Matthew Buckland

Branching Interval Partition Diffusions

We construct an interval-partition-valued diffusion from a collection of excursions sampled from the excursion measure of a real-valued diffusion, and we use a spectrally positive Lévy process to order both these excursions and their start times. At any point in time, the interval partition generated is the concatenation of intervals where each excursion alive at that point contributes an interval of size given by its value. Previous work by Forman, Pal, Rizzolo and Winkel considers self-similar interval partition diffusions – and the key aim of this work is to generalise these results by dropping the self-similarity condition. The interval partition can be interpreted as an ordered collection of individuals (intervals) alive that have varying characteristics and generate new intervals during their finite lifetimes, and hence can be viewed as a class of Crump-Mode-Jagers-type processes.

Ofir Gorodetsky

Smooth and rough numbers

We all know and love prime numbers, but what about smooth and rough numbers?

We'll define y-smooth numbers: numbers whose prime factors are all less than y. We'll explain their application in cryptography, specifically to factorization of integers.

We'll shed light on their density, which is modelled using a peculiar differential equation. This equation appears naturally in probability theory.

We'll also explain the dual notion to smooth numbers, that of y-rough numbers: numbers whose prime factors are all bigger than y, which in some sense generalize primes.

We'll explain their importance in sieve theory. As with smooth numbers, their density has interesting properties, which we will survey.

Managing your supervisor

Abstract

Your supervisor is the person you will interact with on a scientific level most of all during your studies here. As a result, it is vital that you establish a good working relationship. But how should you do this? In this session we discuss tips and tricks for getting the most out of your supervisions to maximize your success as a researcher. Note that this session will have no faculty in the audience in order to allow people to speak openly about their experiences.

Illustrating Mathematics

Abstract

What should we be thinking about when we're making a diagram for a paper? How do we help it to express the right things? Or make it engaging? What kind of colour palette is appropriate? What software should we use? And how do we make this process as painless as possible? Join Joshua Bull and Christoph Dorn for a lively Fridays@4 session on illustrating mathematics, as they share tips, tricks, and their own personal experiences in bringing mathematics to life via illustrations.